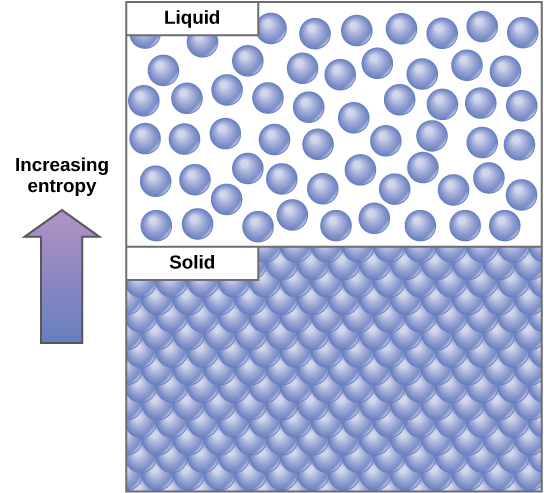

In classical thermodynamics, entropy ($S$) is an extensive state variable (i.e., a state variable that changes proportionally as the size of the system changes, and is thus additive for subsystems) that describes the relationship between the heat flow ($\delta Q$) and the temperature ($T$) of a system. Mathematically, for heat exchanged reversibly, the relationship is $dS = \delta Q / T$. This formalism of entropy and Clausius’s statement of the second law of thermodynamics led to the interpretation of entropy as a measure of unavailability (i.e., entropy as a measure of the energy dispersed as heat, which cannot perform work at a given temperature). It is also this formalism that has allowed entropy production to serve as a measure of spontaneity, unidirectionality, and dissipation.

The Gibbs free energy, a chemical potential energy in a substance, is defined by the equation $G = H - TS$, where $G$ is the Gibbs free energy, $H$ is the enthalpy, $T$ is the temperature, and $S$ is the entropy. This formalism has proven particularly useful in biology for measuring the energy dissipation and thermodynamic efficiency of biological systems, including cells, organisms, and ecosystems. The direct relationship of entropy to temperature and heat allows for precise calculations of entropy production in systems via calorimetry and spectroscopy. These methods have proven valuable as a means to collect data on energetics and entropy production in biological systems, and improvements in the resolution and accuracy of both technologies continue to advance bioenergetics research.

The thermodynamic entropy function proposed by Clausius was extended to the field of statistical mechanics by Boltzmann with the introduction of statistical entropy. In Boltzmann’s formalism, entropy is a measure of the number of possible microscopic states (or microstates) of a system in thermodynamic equilibrium, consistent with its macroscopic thermodynamic properties (or macrostate).
This gives the popular expression of entropy, $S = k_B \ln \Omega$, where $\Omega$ is the number of microstates consistent with the equilibrium macrostate and $k_B$ is the Boltzmann constant, which serves to keep entropy in the units of heat capacity (i.e., J·K⁻¹).
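To make the three relationships above concrete, here is a minimal Python sketch. The function names and the numeric inputs are illustrative assumptions, not values from any measurement. It also assumes heat is exchanged reversibly at constant temperature for the Clausius relation, and constant temperature and pressure for the Gibbs relation written in difference form (ΔG = ΔH − TΔS).

```python
import math

# Boltzmann constant in J/K (fixed exactly by the 2019 SI definition).
K_B = 1.380649e-23


def clausius_entropy_change(q_rev, temperature):
    """Entropy change (J/K) for heat q_rev (J) exchanged reversibly at constant T (K)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    return q_rev / temperature


def gibbs_free_energy_change(delta_h, temperature, delta_s):
    """Change in Gibbs free energy (J), assuming constant temperature and pressure."""
    return delta_h - temperature * delta_s


def boltzmann_entropy(n_microstates):
    """Boltzmann entropy S = k_B ln(Omega) in J/K for Omega equally likely microstates."""
    if n_microstates < 1:
        raise ValueError("a macrostate needs at least one microstate")
    return K_B * math.log(n_microstates)


if __name__ == "__main__":
    # Hypothetical inputs chosen only to exercise the functions.
    print(clausius_entropy_change(q_rev=600.0, temperature=300.0))
    print(gibbs_free_energy_change(delta_h=-10_000.0, temperature=300.0, delta_s=-20.0))
    print(boltzmann_entropy(n_microstates=10**6))
```

A negative result from `gibbs_free_energy_change` corresponds to a process that is spontaneous under the stated constant-temperature, constant-pressure assumption.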

Gibbs extended Boltzmann’s analysis of a single multiparticle system to the analysis of an ensemble of infinitely many copies of the same system, demonstrating that the entropy of a system is related to the probability of being in a given microstate during the system’s fluctuations ($p_i$), and resulting in the well-known Gibbs entropy equation:

$$S = -k_B \sum_i p_i \ln p_i$$

Shannon’s information entropy, $H = -\sum_i p_i \log p_i$, has the same mathematical form up to the constant factor and the choice of logarithm base. As the Gibbs entropy approaches the Clausius entropy in the thermodynamic limit, this interesting link between Shannon’s entropy and thermodynamic entropy has often led to misinterpretations of the second law of thermodynamics in biological systems (e.g., the postulation of macroscopic second laws acting at the scale of organisms and ecosystems). However, it is this same link that has made possible the idea of information engines and has allowed for the use of entropy concepts in many systems far removed from the heat engine (e.g., chemical systems, electrical systems, biological systems).
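As a rough illustration of the Gibbs entropy, the sketch below computes $S = -k_B \sum_i p_i \ln p_i$ for a list of microstate probabilities. The function name `gibbs_entropy` and the example distributions are assumptions made for illustration. States with zero probability are skipped, following the usual convention that $p \ln p \to 0$ as $p \to 0$.

```python
import math

# Boltzmann constant in J/K (fixed exactly by the 2019 SI definition).
K_B = 1.380649e-23


def gibbs_entropy(probabilities):
    """Gibbs entropy (J/K) of a discrete distribution over microstates."""
    if any(p < 0 for p in probabilities):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probabilities), 1.0, rel_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Zero-probability states contribute nothing to the sum.
    return -K_B * sum(p * math.log(p) for p in probabilities if p > 0)


if __name__ == "__main__":
    uniform = [0.25, 0.25, 0.25, 0.25]   # hypothetical: four equally likely microstates
    biased = [0.70, 0.10, 0.10, 0.10]    # hypothetical: one microstate dominates
    print(gibbs_entropy(uniform))
    print(gibbs_entropy(biased))
```

When all Ω microstates are equally likely, each $p_i = 1/\Omega$ and the sum reduces to Boltzmann’s $k_B \ln \Omega$; any less uniform distribution over the same states gives a smaller value.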

In biology, perhaps the most well-known application of entropy is the use of Shannon’s entropy as a measure of diversity. More precisely, the Shannon entropy of a biological community describes the distribution of individuals (these could be individual biomolecules, genes, cells, organisms, or populations) into distinct states (these states could be different types of molecules, types of cells, species of organism, etc.).

What if a motionless wrecking ball is lifted two stories above a car with a crane? If the suspended wrecking ball is not moving, is there energy associated with it? Yes, the wrecking ball has energy because it has the potential to do work. This form of energy is called potential energy because it is possible for the object to do work in a given state.
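Returning to Shannon’s entropy as a diversity measure, the following sketch computes it from abundance counts. The function name `shannon_diversity`, the use of the natural logarithm, and the two example communities are assumptions for illustration; other logarithm bases only rescale the result.

```python
import math


def shannon_diversity(counts):
    """Shannon entropy of a community, given the number of individuals in each state."""
    if any(n < 0 for n in counts):
        raise ValueError("counts must be non-negative")
    total = sum(counts)
    if total <= 0:
        raise ValueError("the community must contain at least one individual")
    # Convert counts to proportions; empty states contribute nothing.
    return -sum((n / total) * math.log(n / total) for n in counts if n > 0)


if __name__ == "__main__":
    even_community = [10, 10, 10, 10]   # hypothetical: four equally abundant species
    skewed_community = [37, 1, 1, 1]    # hypothetical: one species dominates
    print(shannon_diversity(even_community))
    print(shannon_diversity(skewed_community))
```

For the same number of states, the evenly distributed community gives the larger value, which is why the measure is read as diversity: it rises both with the number of states and with how evenly individuals are spread among them.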
